A Markov chain is a stochastic process in which the probability of moving to the next state depends only on the current state, not on the sequence of states that came before it. This principle is known as the Markov property. By repeatedly observing state transitions, one can estimate long-run probabilities, consistent with the ideas behind the Law of Large Numbers.

Unlike models that assume complete independence between events, a Markov chain captures conditional dependence, where each event depends on the present state but not on the full history. This makes it particularly useful for representing real-world decisions and phenomena, such as customer behavior, weather patterns, or market trends, that are not entirely independent yet still follow measurable probabilistic transitions.
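
To make the Markov property concrete, here is a minimal simulation sketch. The two weather states and their transition probabilities are made-up values for illustration, not data from any source. Each step looks only at the current state, and the fraction of time spent in each state settles toward a long-run value.

```python
import random

# Hypothetical two-state chain; the probabilities are illustrative only.
TRANSITIONS = {
    "sunny": {"sunny": 0.9, "rainy": 0.1},
    "rainy": {"sunny": 0.5, "rainy": 0.5},
}

def next_state(current, rng):
    """Sample the next state using only the current state (the Markov property)."""
    states = list(TRANSITIONS[current])
    weights = [TRANSITIONS[current][s] for s in states]
    return rng.choices(states, weights=weights, k=1)[0]

def long_run_frequencies(start, steps, seed=0):
    """Simulate the chain and return the fraction of steps spent in each state."""
    rng = random.Random(seed)
    counts = {state: 0 for state in TRANSITIONS}
    state = start
    for _ in range(steps):
        state = next_state(state, rng)
        counts[state] += 1
    return {s: c / steps for s, c in counts.items()}

frequencies = long_run_frequencies("sunny", steps=100_000)
print(frequencies)
```

For these assumed probabilities the theoretical long-run share of sunny days is 5/6, and the simulated frequency should land close to it.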

The concept was first introduced and developed by the Russian mathematician Andrey Markov in the early 20th century.

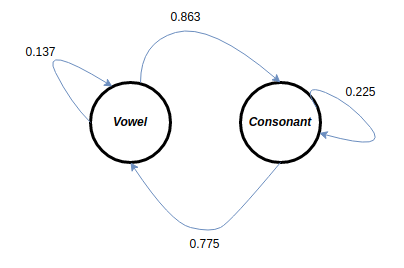

The Vowels and Consonants Experiment (Markov, 1913)

- In 1913, Andrey Markov conducted a statistical experiment on the sequence of vowels and consonants in the text of “Eugene Onegin” by the Russian poet Alexander Pushkin.

- He wanted to challenge the belief that the Law of Large Numbers applied only to independent events (as was commonly assumed then).

- Markov demonstrated that even when events are dependent, as long as they have a certain conditional structure, the Law of Large Numbers can still hold.
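
The counting idea behind the experiment can be sketched in a few lines. This is not a reproduction of Markov's procedure or data: the sample string is a placeholder, and the vowel set assumes English letters rather than Russian. The sketch labels each letter as vowel or consonant and estimates the probability of the next label given the current one.

```python
from collections import Counter

VOWELS = set("aeiou")  # assumption: English vowels, not the Russian alphabet

def classify(text):
    """Map each letter to 'V' (vowel) or 'C' (consonant), skipping non-letters."""
    return ["V" if ch in VOWELS else "C" for ch in text.lower() if ch.isalpha()]

def transition_probabilities(labels):
    """Estimate P(next label | current label) from counts of adjacent pairs."""
    pair_counts = Counter(zip(labels, labels[1:]))
    totals = Counter(labels[:-1])  # how often each label has a successor
    return {
        (cur, nxt): count / totals[cur]
        for (cur, nxt), count in pair_counts.items()
    }

sample = "Markov chain"  # placeholder text, not Markov's corpus
labels = classify(sample)
probs = transition_probabilities(labels)
print("".join(labels))
for (cur, nxt), p in sorted(probs.items()):
    print(f"P({nxt} | {cur}) = {p:.2f}")
```

On a text of realistic length, the estimated probabilities would differ depending on whether the current letter is a vowel or a consonant, which is the dependence Markov was measuring.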

| Domain | Example applications | How the Markov chain is used |
|---|---|---|
| NLP | POS tagging, early speech recognition (HMMs) | Models word/phoneme sequences as states; next token depends on current state; emissions generate observed words/sounds. (Wikipedia) |
| Finance | Credit-rating transitions, regime switching | Uses transition matrices for ratings (AAA→AA, …) and market states; raises the matrix to a power to get multi-period risks. (Moody’s) |
| Physics/Chemistry | MCMC sampling, molecular simulation | Builds a chain whose stationary distribution equals a target distribution; samples via Metropolis–Hastings. (Wikipedia) |
| AI / ML | Reinforcement learning (MDPs) | Assumes Markov state dynamics; policies and value functions depend only on the current state’s transition probabilities and rewards. (Wikipedia) |
| Biology/Genomics | Gene finding, sequence alignment (HMMs) | Nucleotide/protein states follow Markov transitions; hidden states emit observed bases/amino acids. (PMC) |
| Environment | Simple weather forecasting | Weather states (sun/rain/…) transition with fixed probabilities; multi-step forecasts via powers of the transition matrix. (DSpace) |
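
Several rows in the table rely on the same mechanism: the n-step transition probabilities are the n-th power of the one-step transition matrix. A minimal sketch of that idea follows, using a hypothetical two-state weather matrix whose numbers are assumptions for illustration only.

```python
def mat_mul(a, b):
    """Multiply two square matrices given as lists of rows."""
    n = len(a)
    return [
        [sum(a[i][k] * b[k][j] for k in range(n)) for j in range(n)]
        for i in range(n)
    ]

def mat_pow(matrix, steps):
    """Return matrix raised to the power `steps` (steps >= 0) by repeated multiplication."""
    n = len(matrix)
    result = [[1.0 if i == j else 0.0 for j in range(n)] for i in range(n)]
    for _ in range(steps):
        result = mat_mul(result, matrix)
    return result

# Hypothetical probabilities: rows are today's weather, columns are tomorrow's.
STATES = ["sun", "rain"]
P = [
    [0.9, 0.1],  # sun  -> sun, rain
    [0.5, 0.5],  # rain -> sun, rain
]

two_day = mat_pow(P, 2)
for state, row in zip(STATES, two_day):
    print(state, [round(p, 4) for p in row])
```

Row `sun` of `two_day` gives the forecast for the day after tomorrow when today is sunny. Repeated multiplication is the simplest approach; for large powers, exponentiation by squaring would need fewer multiplications.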

Key Concepts to know before coding:

Stochastic matrix – a square matrix that describes the transitions of a Markov chain. Each entry is a nonnegative real number representing a probability. In the common row-stochastic convention, entry (i, j) is the probability of moving from state i to state j, so every row sums to 1.
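
Before using a matrix as a transition matrix, it helps to check that it really is stochastic. The helper below is a small sketch that assumes the row convention, where each row holds the probabilities of leaving one state and therefore sums to 1. The function name and tolerance are illustrative choices.

```python
import math

def is_row_stochastic(matrix, tol=1e-9):
    """Check that a matrix is square, nonnegative, and has rows summing to 1."""
    n = len(matrix)
    if n == 0:
        return False
    for row in matrix:
        if len(row) != n:  # not square
            return False
        if any(entry < 0 for entry in row):  # probabilities cannot be negative
            return False
        if not math.isclose(sum(row), 1.0, abs_tol=tol):
            return False
    return True

print(is_row_stochastic([[0.9, 0.1], [0.5, 0.5]]))  # valid transition matrix
print(is_row_stochastic([[0.9, 0.2], [0.5, 0.5]]))  # first row sums to 1.1
```

The tolerance is there because sums of floating-point probabilities rarely equal 1 exactly.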

Eigenvectors and eigenvalues – decomposing a matrix into its eigenvectors and eigenvalues simplifies many problems in physics, engineering, and computer science. When a matrix is diagonalizable, the decomposition makes it much cheaper to raise the matrix to a power or to solve linear differential equations. For a Markov chain this matters because multi-step transition probabilities are powers of the transition matrix, and a stationary distribution is a left eigenvector of that matrix with eigenvalue 1. Eigenvectors also underlie data analysis techniques such as Principal Component Analysis (PCA), which reduces the dimensionality of data while preserving most of its variance.
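
For a two-state chain the eigen-decomposition can be written by hand, which makes the benefit easy to see. The sketch below assumes a row-stochastic matrix P = [[1-a, a], [b, 1-b]] with a + b > 0. Its eigenvalues are 1 and 1 - a - b, and its stationary distribution is (b, a) / (a + b). The values of a and b are illustrative, and the function names are hypothetical.

```python
def two_state_power(a, b, n):
    """n-step matrix of P = [[1-a, a], [b, 1-b]] via its eigen-decomposition.

    P^n = Pi + lam**n * (I - Pi), where lam = 1 - a - b and every row of Pi
    is the stationary distribution (b, a) / (a + b). Requires a + b > 0.
    """
    lam = 1.0 - a - b
    pi = (b / (a + b), a / (a + b))
    decay = lam ** n
    return [
        [pi[0] + decay * (1 - pi[0]), pi[1] - decay * pi[1]],
        [pi[0] - decay * pi[0], pi[1] + decay * (1 - pi[1])],
    ]

def brute_force_power(a, b, n):
    """Same n-step matrix computed by n explicit 2x2 multiplications."""
    p = [[1 - a, a], [b, 1 - b]]
    result = [[1.0, 0.0], [0.0, 1.0]]
    for _ in range(n):
        result = [
            [sum(result[i][k] * p[k][j] for k in range(2)) for j in range(2)]
            for i in range(2)
        ]
    return result

a, b = 0.1, 0.5  # illustrative transition probabilities
closed_form = two_state_power(a, b, 10)
brute_force = brute_force_power(a, b, 10)
print(closed_form)
print(brute_force)
```

The closed form needs a single exponentiation of the second eigenvalue instead of n matrix products. Because that eigenvalue has absolute value below 1 here, every row of P^n approaches the stationary distribution as n grows. For larger matrices one would normally rely on a numerical linear algebra library rather than a hand-derived formula.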
